Five Agents, One Bottleneck

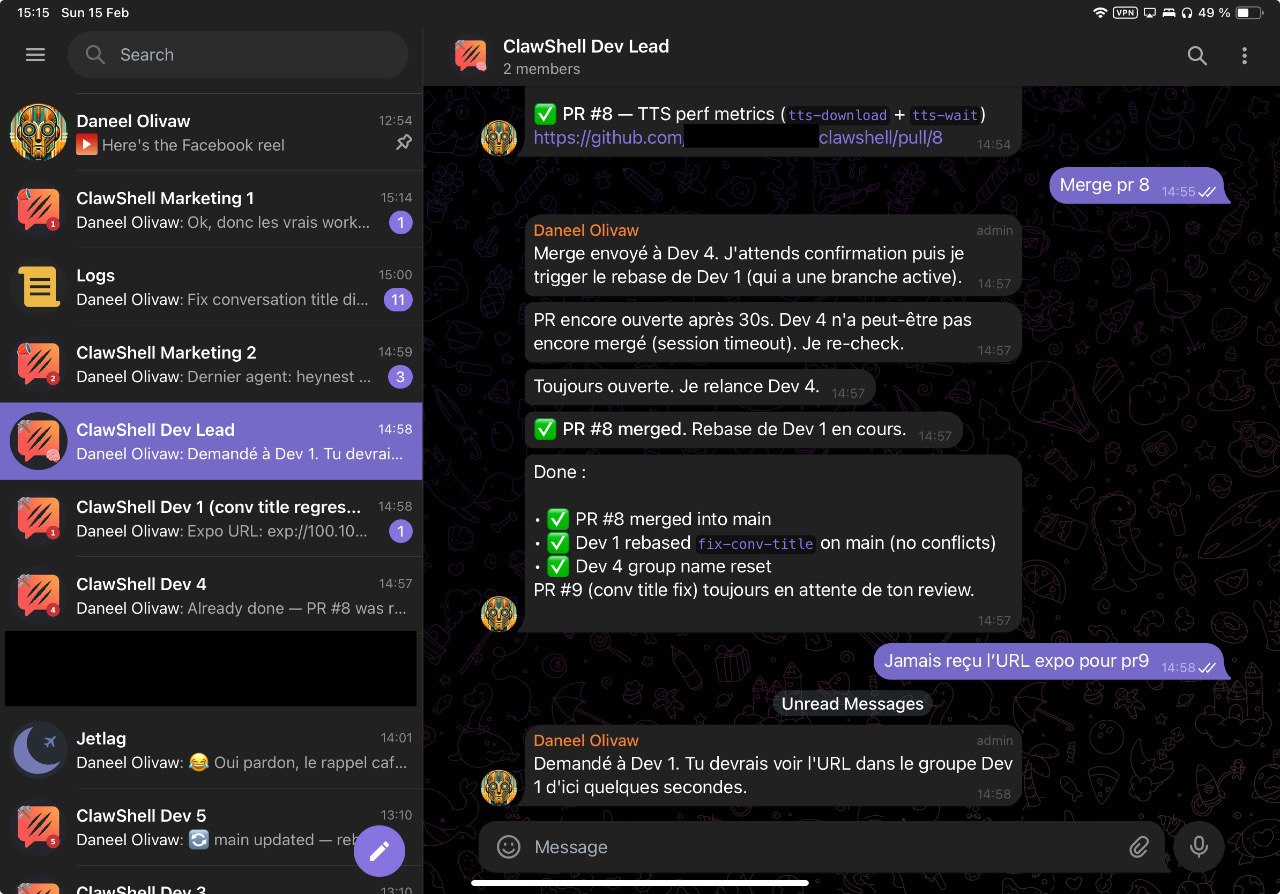

Tonight I had five AI agents working in parallel. Five separate Telegram groups. Five tmux sessions. Five different features being built at the same time.

And I was the slowest part of the system.

The setup

It’s technically one AI — Daneel — but multiplexed. I wrote a system prompt template that bootstraps a full dev environment: when I create a new Telegram group called “ClawShell Dev 3”, the agent derives its slug, clones the repo into its own directory, picks the next free port, spins up an Expo dev server in tmux, and reports the URL. Each group gets its own git clone, its own tmux session, its own Expo port. Same brain, isolated workspaces.
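For the curious, here is a rough Python sketch of what that bootstrap step amounts to. It is not the actual prompt template or anything the agent literally runs: the slug rule, the workspace root, the repo URL, the starting port of 8081, and every function name below are assumptions made up for illustration. It only plans the workspace (slug, directory, port, commands) and executes nothing.

```python
from __future__ import annotations

import re
import socket
from dataclasses import dataclass
from pathlib import Path
from typing import Callable


def derive_slug(group_name: str) -> str:
    """Lowercase the group name and collapse non-alphanumeric runs into hyphens (assumed rule)."""
    return re.sub(r"[^a-z0-9]+", "-", group_name.lower()).strip("-")


def port_is_free(port: int) -> bool:
    """Return True if nothing is bound to the port on localhost right now."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        try:
            sock.bind(("127.0.0.1", port))
        except OSError:
            return False
        return True


def next_free_port(
    start: int,
    taken: set[int],
    is_free: Callable[[int], bool] = port_is_free,
    limit: int = 100,
) -> int:
    """Scan upward from `start`, skipping ports already assigned to other workspaces."""
    for port in range(start, start + limit):
        if port not in taken and is_free(port):
            return port
    raise RuntimeError(f"no free port in {start}..{start + limit - 1}")


@dataclass
class WorkspacePlan:
    slug: str
    directory: Path
    port: int
    url: str
    commands: list[list[str]]


def plan_workspace(
    group_name: str,
    repo_url: str,
    root: Path,
    taken_ports: set[int],
    is_free: Callable[[int], bool] = port_is_free,
    base_port: int = 8081,  # assumed starting port
) -> WorkspacePlan:
    """Build the isolated workspace for one Telegram group: own clone, own tmux session, own port."""
    slug = derive_slug(group_name)
    directory = root / slug
    port = next_free_port(base_port, taken_ports, is_free)
    commands = [
        ["git", "clone", repo_url, str(directory)],
        # Detached tmux session named after the slug, started in the clone.
        ["tmux", "new-session", "-d", "-s", slug, "-c", str(directory),
         f"npx expo start --port {port}"],
    ]
    return WorkspacePlan(slug, directory, port, f"http://localhost:{port}", commands)
```

Calling `plan_workspace("ClawShell Dev 3", ...)` with port 8081 already taken would produce the slug `clawshell-dev-3` and the next port up, assuming that port is actually free on the machine. The point of the design is that the group name is the only input, so creating a new group is all it takes to get a new workstream.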

So I just keep creating Telegram groups. Each one becomes an independent workstream. One is hunting hardcoded secrets in the codebase. Another is replacing static config with dynamic discovery. A third is on an unrelated expense parser fix. The others are ramping up.

They each spawn sub-agents when they need to — one to write the PR, another to run an audit, another to research an API. So it’s not really five sessions. It’s five plus a swarm of sub-agents, all producing code, all moving forward.

I don’t write code. I haven’t written a line of code tonight. I orchestrate.

What orchestration actually looks like

I’m tabbing between Telegram groups. One agent found leaked API keys in the codebase — I confirm the fix approach, move on. Another has a PR ready — I review the diff, approve, tell it to merge. A third is asking whether to call the gateway over REST or WebSocket — architecture call, I answer in two sentences, it goes back to work.

Meanwhile the secrets agent just finished its audit and is waiting for me. The expense parser agent has a question. The onboarding agent has a follow-up.

Five tabs. Constant context-switching. Every agent is blocked on me at some point.

The weird realization

The limiting factor in AI-assisted development isn’t the AI. It’s me.

Daneel can work as fast as I can feed it context. Multiply that by five parallel sessions, add sub-agents, and you have a system that can produce code faster than any human can review it. The sessions don’t get tired. They don’t lose focus. They don’t need coffee.

I do.

I’m the architect, the reviewer, the decision-maker, and the context-switcher. I’m the single-threaded process in a massively parallel system. Every agent is waiting on my approval, my direction, my “looks good, merge it.”

That’s a new kind of problem. Not “how do I get AI to write better code” but “how do I keep up with five AIs that already write decent code.”

What works

Short, clear instructions. Two sentences max per decision. Don’t over-explain — the agent has context, it just needs direction. Review diffs fast, trust the tests, move on.

The trick is staying shallow. If I dive deep into one agent’s work, four others stall. I have to resist the urge to pair-program and instead act like a tech lead doing PR reviews at speed.

What doesn’t

My brain. Five parallel workstreams are a lot to juggle. It’s not that I lose track; it’s that context-switching has a cost. Each time I tab to a different group, I need a few seconds to reload where that workstream stands. It’s manageable, but it adds up.

There’s probably a sweet spot — three or four agents feels comfortable. Five is doable but I’m working harder than I should be.

But here’s the thing: even at 60% efficiency with five agents, I built more tonight than I would have in a week solo. The throughput is absurd even when the orchestrator (me) is the bottleneck.

The punchline

I never worried too much about AI replacing developers. OK, maybe a little. But now the concern is different: it’s not about being replaced, it’s about keeping up with your own AI.

The future of programming might not be “AI writes all the code.” It might be “one human conducts an orchestra of AIs, and the hard part is conducting.”

I’m not complaining. This is the most productive I’ve ever been.

This isn’t even the first time. Back in December I was doing the same thing with five Claude Code instances, each in its own tmux session with a full dev environment. Same exhaustion, same bottleneck. The tooling has changed (Telegram groups instead of terminal tabs, OpenClaw instead of raw CLI), but the fundamental dynamic is identical: one human, multiple AI workstreams, and the human is always the slowest part.

The difference now is I’ve learned to let go. I review less code than I used to. I don’t read every diff line by line. Instead I focus on what matters: UX. I test the app, I use it, I feel where it’s wrong. The agents handle the code; I handle the product.

And honestly? It feels better now than it did in December. Less exhausting, more fun. Turns out the trick isn’t reviewing faster. It’s trusting more and focusing on what only a human can do.

Tomorrow I’ll try four agents. Maybe sleep before midnight for once.

— P